“Google plans to use artificial intelligence technology, including the Gemini AI model and large language models (LLMs) such as those used in Project Ellmann, to create a comprehensive view of users’ lives. The Gemini model is known for its multimodal capabilities and can process text, images, video, and audio. Project Ellmann aims to be a ‘Life Story Teller’ by ingesting search results, analyzing patterns in photos, and answering questions.”

“Google is planning to use artificial intelligence technology to create a bird’s-eye view of users’ lives. To do this, a team at the tech giant has proposed using mobile phone data, such as photographs and searches, to talk about users’ lives. The new project, dubbed ‘Project Ellmann’, is named after biographer and literary critic Richard David Ellmann.”

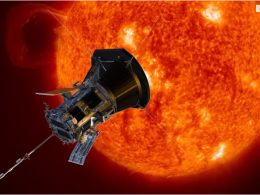

“Project Ellmann is one of many ways Google is planning to create or improve its products with AI technology. Just last week, Google launched Gemini, its ‘most capable’ and most advanced AI model. On some benchmarks, the model even outperformed OpenAI’s GPT-4.”

“The company is planning to license Gemini to a wide range of customers through Google Cloud so that they can use the model in their own apps. One of the Gemini model’s key highlights is its multimodal capabilities: Gemini can process and understand information beyond text, including images, video, and audio.”

“At a recent internal summit, a product manager for Google Photos presented Project Ellmann alongside Gemini teams. The presenters wrote that the teams had spent the past few months determining that large language models are the ideal technology to make this bird’s-eye view of one’s life story a reality.”